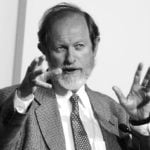

Roger Clarke

Jordan Brown interviews Roger Clarke, for the documentary film project that would eventually become Stare Into The Lights My Pretties. They speak about Google, surveillance, privacy, and societal issues.

Roger Clarke is a longstanding public-interest advocate in relation to information privacy, and is the chair of the Australian Privacy Foundation. He is also a senior Information Systems academic at the Australian National University in Canberra.

Jordan Brown: Does dominance in search present a risk to diversity of information?

Roger Clarke: Yes, there are concerns about what the impact is going to be of Google having as much market share as it has. We’ve seen this before in the IT industry; we saw it with IBM, we’ve seen it and still see it with Microsoft in particular areas. Google’s base is a search engine. Its search engine isn’t all that much ahead of the pack; it has some advantages and some disadvantages compared to the others. It’s been—like Microsoft before it—a major success in marketing terms and its competitors have simply got to compete and compete more effectively. Now, if Google does succeed in sustaining the dominance that it’s got in Australia and in some other countries (not everywhere), if it succeeds in sustaining that dominance, it does have the potential to leverage off that in order to become the primary mechanism for discovery and potentially therefore the primary channel for distribution of content. Now that by itself is a bit of a cause for concern; the biggest concerns are, however, if it gets to the stage of being able to interlink, to cross-leverage, between its discovery channel and its control of content. So if it becomes also the primary repository for materials, that’s a much bigger issue than if it’s the primary channel for discovery of materials.

Jordan Brown: We’ve seen that sort of thing happen with Google News and YouTube with the repository; do you see them moving from the ‘indexing of the content’ to the ‘housing of the content’ too?

Roger Clarke: Yes, they’ve shown interest in several different ways in getting control of content as well as control of discovery. It’s not universal, examples being obviously YouTube, but there’s also the Google Books venture—which has got its positive features, as have many other things that Google does—but in the event that Google succeeds in gaining a stranglehold on particular content—in this case, old content of out-of-print books held in libraries—if it gains a stranglehold on those sorts of areas then it’s in a position to dominate content as well as channel. There’s a few other possible ways in which it may have real interests. Mail is one of them. Gmail is an enormous threat and is one of the worst features of Google’s offerings, because people volunteer their correspondence—not just what they write, but what they receive from other people—into Google’s archives; and that is very probably the sole long-term archive of a great deal of email that is trafficking around the world at the moment; that’s a really serious threat. In addition, some of their more recent initiatives like Google Wave, some of their initiatives in ‘cloud computing’ so-called, where people are supposed to not only use software that’s running on other people’s computers—especially Google’s—but also store their data on other people’s computers—especially Google’s. That becomes a serious concern if the terms and conditions are such that Google gets access to that data. So there are many different threads in the things that Google is doing which relate to content, not just to discovery.

Jordan Brown: Can we talk about Gmail a little bit more; your concerns with Gmail?

Roger Clarke: There are many ISPs and many web-mail service providers who are somewhat different beasts, offer slightly different services. There are many of them who offer some kind of archival. But that archival is strictly under the control of the individual, it’s not claimed as being something that the service provider has an interest in. In Google’s case, it’s completely different. Unlike Yahoo!, unlike a Hotmail, the Gmail service is all about the archive sitting on Google’s disks as long as Google decides it should be there and available to Google for use. That’s a very different environment. And remember that mail is two sided—it’s not just a case of each individual who chooses to use that service having their mail (that is to say the emails that they capture) stored in that database—everything that is sent to those individuals, or indeed everything that is sent to those individuals’ other email addresses which the individual chooses to flush through Gmail, which quite a lot of people seem to be doing—all of that mail from the rest of us gets caught up in this archive. Now that is a huge amount of information about the behaviour, the interests, and the attitudes of a huge number of people.

Jordan Brown: What is Google doing with the data that it’s retaining from Gmail in particular?

Roger Clarke: Well, we don’t know of course. Because Google isn’t going to tell the likes of you and me what they do with the data that they’ve got. It is clear that they’re using it for the purposes of, I’d call it consumer manipulation but of course they’d call it ‘marketing services’. They are targeting the ads that appear on people’s screens based on the profile that they’ve developed about that individual. And that profile reflects all the different sources that they’ve got—your search terms, the mail that’s passing through, the places that you visit by clicking through from a Google page. So there’s a great deal of information they have about people’s attitudes. So that’s the first and upfront one; they are essentially an advertising organisation, that’s what their revenue stream is from, and they want to know more about you, they want to know everything about you so they can target their ads well. But when you’ve got a massive amount of data sitting there like that, you’d be mad if you didn’t look for other alternatives, other opportunities to monetise the asset that you’ve got—that’s actually an obligation that company directors have at law. Now the sorts of things that other organisations might like to do with it—with all that data—are many and varied. Lots of organisations are interested in consumer profiles, lots of organisations are interested in citizen profiles. We think about foreign governments in less free countries, but indeed governments in relatively free countries are also interested in profiling people. So government agencies as well as corporations will be willing buyers, coughing up lots of money as Google decides that it is going to look for additional sources of revenue from its massive archive.

Jordan Brown: Maybe we should explain the way they’re profiling a bit more.

Roger Clarke: Well, there’s a couple of approaches to profiling, a couple of different uses of the word. One is to say, “Here is all the data I’ve got about a particular person, let’s summarise that, let’s cut it down to something simple—and so that this person is a person in their 20s, they have particular preferences for the following brands, they’ve got interests in the following sports, or no interest in sport at all—so a summary, a profile of a person.” That’s one use of the term. A second use of the term is to produce abstract profiles, and a typical example here is the abstract profile of a drug courier. Now clearly a drug courier will probably be flying back in from Thailand, probably more than once; and will be of a particular age and quite possibly of a particular apparent lack of a regular job—there’s all sorts of things that can be identified in particular abstract profiles of a pattern of behaviour of a kind of person. Now, in the event that an organisation has established a profile like that—and obviously in this case for good reasons—then if you’ve got enough data you can run the archive of data about many many people against that abstract profile and identify suspects. So those are the two different kinds of uses of the word ‘profiling.’ Now, profiling in itself of course is not evil; there are perfectly good reasons to do profiling; what we’re concerned about is unregulated use of data, and unregulated uses of profiling which are harmful to the interests of consumers and citizens; and there’s enormous scope for that in the kinds of archival build-up that Google is doing.

Roger Clarke: When you’ve got a vast archive of highly disparate data that’s come from a lot of different sources, it’s not so simple to draw conclusions from it. You’ve got to apply some fairly smart processing. It’s one thing when you’ve got nice simple rows and columns of data—the kinds of things that government organisations and business have been running for the last 30 or 40 years, where we’ve pre-captured the data from transactions and we know what each item of data is. Data mining is used where you’ve got a lot of blobs of heterogeneous data—data that is organised very differently, particularly textual data; text and indeed voice. In those circumstances you’ve got to do fuzzy matching—even identifying that it is the individual that’s being referred to may be tricky because of the way in which an email refers to a person; it can be via pronouns (‘I’ and ‘you’ and ‘them’ and ‘us’) and that requires some reasonably advanced processing of the English language—or indeed other foreign language structures and syntaxes, in order to draw proper inferences. Now of course, all data mining is based on some degree of uncertainty and fuzziness. It’s not pulling out truth, it’s pulling out probabilities. It’s looking for, “Well there’s been lots of circumstances in which this person has been referred to in the same context as that person from dare-we-say-it-right-now Somalia—or some other place that is currently out of vogue; that is currently associated in the public mind or the investigator’s mind—with some kind of wrong-doing.” There’s all sorts of fuzziness about these kinds of associations. So data mining produces uncertain results.
Across very large populations, it might well lead to some quite useful information—about the behaviour of consumers in supermarkets; about the attitudes of the public towards particular events; information about how quickly information ripples through a society when viral marketing campaigns are conducted—there’s all sorts of generic stuff like that where the probabilities are fine, it doesn’t matter that it’s fuzzy information, it doesn’t do great harm to individuals. What is a concern is when that kind of fuzziness and that kind of data mining technique is applied in ways that directly affect individuals … those techniques combined with the size and the variety of the growing Google archive, these are the sources of concern.

Jordan Brown: How is Google data mining? What are they doing?

Roger Clarke: No idea. Once again, Google isn’t going to tell you that kind of thing, and if they do, it’s the marketing and PR department that’s going to be telling you, not the people who are actually doing it. So the scope for both confusion and uncertainty and mistranslation between dialects of English; and also the desire of marketing and PR people to send good messages and remain on message—all of those things mean that we aren’t going to find out. It would be a surprise if Google weren’t using data mining because they’ve got this large archive and they have said that they are using this for targeting advertising; well they’ve got to use some kinds of data mining techniques to do that. But no, simply, they’re not going to tell us much.

Roger Clarke: What I think is apparent—to keep this reasonably simple, ’cause they’re doing some fairly complicated things, but to keep this reasonably simple—look at the keywords that you use when you do searches, which of course they gain access to because you disclose those keywords to Google when you request the service from them. If you use a term once in three years, it’s not necessarily providing anybody with a great deal of information except that you asked about that once. But if you use that and related terms, things that form a cluster, and you use them fairly frequently—particularly if you use them around the time of a promotional campaign and they relate to the thing being promoted—then it indicates something about you. So it would be natural for Google to be searching for those kinds of clusters in your own behaviour and in relation to advertising campaigns and in relation to individual ads that Google may have popped up on your screen recently. So there’s a range of ways in which various kinds of general data mining techniques could be relatively logically applied. Now Google have been working on this for a long time; Google hire bright PhD graduates from smart universities; so staying at this level of generality and this level of simplicity isn’t good enough, we’ve got to assume that Google’s a bit ahead of the game I’m talking about here.

Jordan Brown: … Considering the types of personal information that you do disclose, I want to get to the discussion of privacy, and the notion of contributing voluntarily to that profile. What are the privacy risks in using a diverse range of services with one company?

Roger Clarke: The example that I give about the search terms that you use and the amount that you disclose about your interests and what you’re interested in, when; is only part of the story, because the way Google has structured things you’re disclosing a lot more to them than just your search terms. When you use Google you’re commonly using it from the same IP address, from the same device, from the same browser; you’re also identifying yourself in many circumstances because you’ve logged in, and you’ve logged-in in the way Google have structured it, using your email address. Now because of that, they’ve got several ways in which they can correlate the various things you do across various services. So they know that the mail that you send is from the same person as the person who used those search terms. They know that the profile that you might have on Orkut—their social networking service—is the same profile, the same individual as those search terms. They know of course that the click-throughs from the search you did are the same person. They could be wrong sometimes, because there might be a swap between yourself and your partner on the screen but mostly they’re right, mostly it’s you. Now they’ve used that trick across all of the services that they produce, and each of the new services that they come along with. So, if you become a Google Wave devotee in a year’s time when that service may become available, you are once again making available to them not just a bunch of data associated with Google Wave, but a bunch of data associated with you in Google Wave combined across with all the data associated with you in your search terms and in your Gmail. All of these things are correlated with one another. So the data mining isn’t being done just across individual sets, it’s being done across the sets.
And that’s a much much richer insight into what you are, what you’re interested in, what sorts of responses you have to what sorts of stimuli, in particular Google ads.

Jordan Brown: What is the main concern with that?

Roger Clarke: These uses of data that Google’s doing are for a particular purpose. They’re for manipulation of our behaviour as consumers. Now, consumers believe that on the whole, they’re operating with their own free will; that they are seeing advertisements as they go by, and particularly in the mass-marketing era they’re seeing those ads fairly passively—on billboards, they’re seeing them flash past them on TV screens; they’ve been targeted, but only at a very broad category of people, they haven’t been targeted specifically at you as an individual. But as we’re reaching the stage that those ads are being selected and even customised to suit what the advertiser knows about you through Google’s archive, your freedom of choice is becoming mythical; your freedom of choice is being removed because you’re being manipulated. We’ve talked about brainwashing in the past, it’s a bit of a strong expression, but the degree of influence over what you’re doing is greatly increased by a skilful advertiser with all that data at their disposal. Now, bear in mind of course, it goes beyond that because that advertising is being done on behalf of other organisations—Google is seldom advertising its own services, it’s advertising other people’s services or helping them advertise their services through their infrastructure. And beyond that, that archive that they have available, that profile of you that they have available, is of course available for sale; available for exchange with strategic partners; for sale to customers; for sale to governments who have an interest. Clearly we think about the unfree governments—China being a good example, because Google is very active in China—and we really should feel uncomfortable about this kind of profile information falling into the hands of unfriendly governments. So there’s a series of layers of concerns starting with the consumer manipulation and moving further.

Jordan Brown: I want to take that into the personalisation of experience. Do you think that motive of consumer manipulation is part of the personalisation experience?

Roger Clarke: There’s no doubt that there’s been a change in the last 15 to 20 years in the flexibility of displays on the screens that we’ve got. There’s had to be because the screens vary so much. Screen size, screen dimensions—the shape, the length versus width/orientation issue—that’s changed so much there’s had to be a lot of new tricks developed in order to display things appropriately on PDAs, mobile phones, PCs, widescreen PCs, TV screens, and so on. Now part of that technology has involved also the ability to change the colour palette, to change the size of text, to change the font in which text is displayed—we’ve been doing that for 15 to 20 years, improving the sophistication that’s available. Now that can be used for a variety of reasons. One very good reason can be because a person’s got impaired vision and needs large text and doesn’t want serif fonts with little bits hanging off it looking nice, they want nice clear straightforward letters. So there’s some very positive reasons for these things, but all that new power provides a skilled projector of information with an ability to match the display to the particular person’s interests, and not necessarily for the benefit of the person, but quite possibly for the benefit of the person doing the displaying. So I think we are moving towards a stage where there’s a great deal more scope than there used to be in the past to manipulate the display for that individual not just on the basis of, “he or she is a consumer, put it on their screen,” but “this is a particular consumer with a particular identity and a known profile, let’s vary it this way because we know from past experience that that will have a greater impact on that person.” So I think the scope for consumer manipulation is very considerable. Now bear in mind here with all of the negatives that I talk about, we’ve got to look at the other side of it.
If we as consumers think we’ve got that under control, if we think we can choose the font size, the colour palette—we can have it a bit muted in the morning and a bit brighter in the afternoon when we’re awake—if we think we’ve got it under control like that then we see that as a positive benefit to ourselves. The concern that I’m expressing is where that control is not in the hands of the individual, but where it’s in the hands of the advertiser and particularly where the individual isn’t prepared for that, doesn’t realise that, and doesn’t have natural defences built in, natural scepticism learnt all the way through school and through their behaviour as a consumer. So we really do need to up our own scepticism about the things that we see on screens in this new environment that Google and others are bringing to us.

Jordan Brown: Do you think that that [manipulation] stems to content, not just the display of content?

Roger Clarke: Yes, there’s a lot of concern about whether the ‘precedence’ (which is the word they tend to use in search engine lingo), the sequencing in which hits are presented to you on screen, whether that is being designed to reflect your needs or whether it’s been designed to reflect the interests of marketers or Google. We’ve seen some compromise to this in that sponsored links are being inserted either at the top or down the side. Now the reaction of privacy advocates to that has been, “well how far you go with this is an issue because of course it’s intruding, but the most critical issue is that it be clear which ones are sponsored links, which ones we would like to push in front of you; and which ones are the results of a ‘neutral’ search mechanism which is servicing the user’s needs.” Now at this stage we still seem to have sufficient differentiation across the top and down the sides and therefore privacy advocates don’t get wildly upset. These services need to be paid for, privacy advocates aren’t anti the private sector, aren’t anti-profit; these things cost money. But there is much bigger concern about whether the sequence within the ‘neutral list,’ once we get away from the sponsored lists, whether the sequence in the ‘neutral list’ really is neutral or whether it’s favouring Google. Of course Google won’t tell you what technique they use. We know, most of us from personal experience of using Google, that with a great many searches that we do, they don’t do too badly when we know there are some links that we want to find and re-find, and we just think Google is the quickest way to get to them; we often find that the things we’re really after are in the first page. So it hasn’t reached the point where Google is manipulating to the point of being totally anti the consumer’s interests—I wouldn’t suggest that for a moment.
They have good reason to want to continue to be seen to be doing things for the consumer; otherwise people will drift away from them to competitors. But there is certainly concern about selectivity; we know full well that in China you don’t get the same, you don’t get access to the same things as you do in other countries and that represents a bias that’s been built in at the behest of government or by Google thinking it might be a good idea just to keep the Chinese government happy, that we leave a few things out. So we know that selectivity of content takes place at least in some contexts; we don’t know whether that takes place in other countries because we don’t actually know what their algorithms are.

Jordan Brown: Where do you think they’re heading then?

Roger Clarke: Well they’ve been quite specific about a couple of aspects of their broad corporate strategy because they talk to their shareholders, and they say to their shareholders, or they used to say, “We’re moving towards a Google that knows more about you.” Well that was five years ago. Now we already have a Google that knows a lot more about you. That’s quite an explicit part of their corporate strategy. It’s also quite clear that each new idea that their bright PhDs come up with only makes it through to even beta let alone full production if it works in with, cross-leverages with, existing services. So if it doesn’t build on, in some way, that’s helpful to Google, then it will only be an oddity, a curiosity sitting on the side for a while and might wander off or be incorporated into some other product later. So that’s a couple of aspects of corporate strategy. There’s nothing unusual or evil about that aspect—that’s normal, corporations do that, it’s what corporations must do in order to have a business, sustain a business and make profits for their shareholders. But when a company has substantial control over important things like information and access to information, it’s a legitimate public concern that they might turn, they might come to abuse that power.

Jordan Brown: So I might ask you—is Google ‘Big Brother’?

Roger Clarke: The concept of Big Brother has of course been rather undermined in recent years by a television program, but the original concept of Big Brother has to do with a State which gains power over individuals, and forms their opinions for them, and places serious constraints on their freedoms. Now Google is a corporation, it’s a corporation that has substantial control over advertising channels, and over discovery channels for information. The fact is at this stage, up until now, we are still getting access to more information more easily than we ever did before. So we haven’t reached the flex point where we have been or have had our freedoms cancelled out; the extent of manipulation of our thinking, of our choice is still quite limited. We haven’t reached the point where the alarm bells have got to be rung and revolution is needed, we may hopefully be a long way from that, by having discussions like this and thinking our way through and standing up against some of the bad things Google is doing. Society is holding them off. So it would be a real exaggeration to say, “Big Brother has arrived and his name is Google,” that would be right over the top at this stage. What’s clear is, however, that Google is doing a number of things that lead in the direction of that kind of manipulation. Now one of the big things we’ve got to understand is that corporations do this, corporations have to do this. As a company director and a company chair which I have been and am in various forms, it is my obligation when I’m acting as a company director to act for the benefit of the company. The company is an abstract notion, you think of it as being the shareholders, it’s slightly more complicated than that—it’s the company of shareholders. You must, you have a legal obligation to act on their behalf, and act for their benefit. So should we do nice things for consumers and citizens? Well the answer is, at law you can’t unless it is ultimately for the benefit of the company.
Now donations, sponsorships, can you do that as a company director? Can you approve those things? Well yes, but only because they are for the benefit of the company; because they will get exposure for the company and its brand, and its sub-brands; because it will associate those brands with something positive in the public eye—you could only do sponsorship and donations for those sorts of reasons. So when we have these stupid discussions, as people do in the media, about “do no evil, Google doesn’t do evil,” it’s a pile of absolute nonsense, and the media is culpable for swallowing the stuff that is pushed to it by Google. If it is in the company’s interests to do evil, then it is the director’s obligation to do so. Now bear in mind there are constraints—if the law says it’s not just evil, it’s criminal, then the director is required to not do it by law and therefore he can say sitting in the board meeting, “We can’t do that, it’s illegal, it would be in the company’s interest, but we can’t do it.” So if the law places specific constraints and draws the line in the sand, then the directors get on and work within the law—by-and-large—certainly this director does, but most directors do because it’s not worth working outside the law, because there’s plenty of scope to make profit inside the law. So it is the obligation of the law to place the constraints on directors. So presumptions that Google doesn’t do evil are nonsense. Google must do such evil as is not illegal evil, in order to advantage the corporation. Consumers are customers, consumers are there to be used—that’s how business works, and privacy advocates and freedom advocates are silly if they don’t reflect that. That is the way our world works in the mixed market economy that we’re in.
So what we have to do is have appropriate laws in place, and we’re lacking a lot of those; we have to have appropriate countervailing power against dominant corporations and that means a combination of competitors getting their act together and competing effectively; and it means well-informed consumers who are pushing back against the silly things that corporations like Google sometimes do.

Roger Clarke: When a person signs up to use Gmail—and I don’t have deep personal express of this ‘cause there’s no way in the wide world that I’d touch it—when a person signs up as a subscriber they accept the set terms and conditions. Google wrote them, you don’t get to negotiate on them. And almost nobody understands those terms and that includes the people who’ve tried to study them and understand what they really mean. It’s pretty likely that the authority that you signed across to Google includes the ability for them to use the contents of that mail however they choose to, and to keep it for as long as they like, and to interrelate it with anything they’d like to interrelate it to, ever. And by ‘freedom to use it,’ I mean freedom to disclose it, freedom to exchange it, with other organisations. Now look, it’s a moving target, and I haven’t looked at the Gmail conditions since soon after the Gmail…twice, at the beginning when Google first announced Gmail and then over a year subsequently—I haven’t looked at the current terms. Do people who use Gmail understand that? And I suspect that many of them don’t. Do people who use Gmail realise that not on their disk drive, but on somebody else’s disk drive, the corporation that operates for profit; everything that they’ve done in their mail, all of the exposures that they’ve have had, all the interests and the behaviour and the attitudes; are there arguably for all time. Now, in privacy we use expressions like, “There will always be another bigotry.” That is to say, you don’t know what in some future environment you will be accused of being—possibly being in favour of heterosexuality will one day be a bad thing; having been a member of a particular church which has become discredited could become a bad thing which could be a black mark, which could result in you not being able to, for example, join the public service. 
Those sorts of things have happened in the past, and they’ve happened in many countries including Australia. Do we really want to, as Gmail subscribers or email subscribers through any service, to be gifting that kind of history forever, to a corporation? Now of course, those people who use Facebook, and put stuff on Facebook that invades their own privacy, embarrasses them when they apply for jobs three years later, and indeed embarrasses their friends when their friends apply for jobs three years later; the people who do those kinds of things on Facebook might well say, “So what?” I think in three years’ time or five years’ time or 15 years’ time, those people are going to look at it differently. They’re going to be saying, “But surely you’re not going to take notice of that rubbish? I was off my face when I was young, aren’t people supposed to get off their face when their young?” That’s the attitude that many of us have. Young people are always more happier with risks than older people are and that’s the same person—the older one is more risk averse than the younger one was. Yes, I and many of my friends before I had a grey beard did lots of stupid things. I had the advantage that they were only visible in a limited space because the ability to film things was nothing like then what it is now; and they were not only limited in space, they were limited in time, because they weren’t recorded and they weren’t subsequently available to anybody except by word of mouth. So I’m protected—my youth is alright; all the things I did that were stupid in my youth aren’t up there on Facebook, and won’t be; and they aren’t in Gmail. But the current generation are facing a different thing. They’re facing capture and recording and retention in ways that we think is quite inappropriate, it runs quite against the grain for humans. So Gmail has some real problems for the people who subscribe to it. My concern personally now, not as an advocate, is that I don’t get the choice. 
If I converse with somebody at a Gmail account, my email goes into that archive and gets gifted by the other subscriber, by the recipient, not by me, gets gifted by the recipient despite the fact that in a sense it’s my email—I find that a major problem. I sent that email to that person for that person’s purposes (obviously all the addressees; and if I sent it to an e-list, then to the members of that list). I didn’t send it into that great big archive in the sky to be used as that great big archive’s owner sees fit, forever. So I think the rest of us have got a major problem. And bear in mind, this is hidden. If you or I send an email to a list which has an unknown number of subscribers but many lists have got 200, 500, 1,000 subscribers—if a single one of those subscribers is a Gmail account user, my posting to that list is not limited to that list, it goes into Google’s archives. Yet another variant. If I email to somebody at an account that is not a Gmail account, and if that person uses Gmail as their personal repository and flushes all their mail into Gmail, then unbeknownst to me I’ve sent that letter into an environment in which it will end up in Google’s archive forever, for whatever Google wants to use it for. I find that absolutely inappropriate because it means that the privacy of individuals who aren’t subscribers has been invaded; and the freedom of speech is now being constrained, because I’m now thinking when I compose an email, “Oh I wonder if the recipient unbeknownst to me is flushing that through a Gmail account.” And it constrains the freedom of speech which reduces the human interaction that we thought we were getting, the freedom to interact that we thought the Internet was bringing to us. Those are the sorts of things I mean by a dark ages. Once people start self-censoring, once people talk differently because they think they might be being observed, we’ve had our freedoms constrained. 
This is freedoms constrained not by parliament which says, “No no no no, naughty person, you mustn’t do this, it’s unfair to other people”—not by a parliament, this is freedoms constrained by a corporation because of the way in which that corporation does business. Now every single one of those points was made to Google about Gmail in 2004 before they launched it. A group of us, I think there were 30 or 40 signatories from all over the world sent a letter to Google explaining why they should not be launching a service with these features. We’re not saying they shouldn’t launch all sorts of features of services, including ones different from Hotmail and Yahoo!—we’re not saying that at all—we’re saying these particular features have got really serious consequences for humans and you should not be doing this, it is a very bad public policy move; and of course we sent similar letters to regulators who failed to act, and parliament has never done anything to get this under control. European Data Protection commissioners argue about whether two years or 18 months is a good time for Google and other operators to be permitted to keep logs—absolute nonsense, absolute failure of the regulators to have meaningful conversations. They should’ve simply said, “As soon as the purpose has expired, we think 18 seconds is pretty good, tell us if you think more than 18 seconds is necessary.” That’s what they should’ve done, that’s what they haven’t done. So we’ve had very dangerous play for humankind by Google and failure by regulators, and therefore the public has got to act. The public has got to learn about what Gmail is doing to their and other people’s mail, and the public has got to get upset about it.

Jordan Brown: Google’s stated mission is to “organise the world’s information and make it universally accessible and useful.” What do you think that means in terms of privacy?

Roger Clarke: The mission statement, like all mission statements, sounds great; until you take it down into fine detail and try and work out, well where will that lead us to? There’s a great deal of information about humans that you don’t want to have widely available, that you want to provide particular protections for. We hear this nonsense about, “the only people who are concerned about privacy are people with something to hide.” Well, yes. How about your password? How about your PIN? How about various aspects of your physical person? How about various aspects of your health? Various aspects of your finances? The fact that you’ve got a really really valuable painting in a house that is really easy to break into and that doesn’t have a security system? How about the way your kids go to and from school? What your daughter drinks, and which drink to spike? There’s any number of things that people have to hide. There are a great many things that individuals are ill-advised to put into a public space. You might want to write them in your little black book to remember them, but you shouldn’t be, you are badly advised—it creates insecurities for yourself if you let other people know them. That’s not reflected in that mission statement. Now to be fair, when you’re trying to have a mission statement, you want it nice and short and punchy. So I’m not going to object to their mission statement, but some of the things that they do following on from that, don’t reflect the realities, don’t reflect the constraints that human beings want to place on an organisation that organises the world’s information. So, a lot of those things are great—freedom of access to government information; more information about what businesses do; more information about what politicians do and what votes they go for, and who they have lunch with—all of those sorts of things, good stuff; because they’re in the public interest for that to be known. 
There’s a great deal of enormous benefit in what Google is doing, and the other search engine providers are doing. But there have to be constraints. There are things, aspects of information that need to be handled with much greater care.

Jordan Brown: “Privacy and security, do they go hand in hand?” Do you want to comment on that?

Roger Clarke: Yeah, it’s always a complicated question—privacy and security. There’s a couple of ways of looking at it. The first one is, that security is about ‘one twelfth of privacy’ in the sense that—if we have sensitive data such as our healthcare data which is locked in a doctor’s surgery, then we don’t want it escaping from our doctor’s surgery; so security is our friend (so it’s about a twelfth of privacy, because there’s about eleven other principles depending on how you wrote the principles). Another way of looking at it is that security—in the sense of national security particularly—is an enemy of privacy because national security people say, “Well, the only way that we can protect the public against nasty people is to know everything about everybody.” And clearly that’s a nasty breach of the expectations we have. “Finding balances” is what the game is about. So security and privacy have relationships and overlaps, and they also have some circumstances in which they’re head-to-head competitors. Another example that’s quite a challenging one is, we say that individuals must be able to get access to data about themselves. Very important principle. Particularly when it’s sensitive data, how do we know that the person we’re giving the data to is the right person? So there the corporation or the government agency does say, “Yeah, I’ll give you this data but I need to be pretty confident that it really is you that I’m giving it to.” What do they do? Well, they’ve got to invade the person’s privacy; they’ve got to have enough information from that person in order to satisfy themselves at a level of confidence commensurate with the sensitivity of the data. We say that as privacy advocates. So we’re setting them a privacy puzzle, a privacy and security balance puzzle. 
That’s the world; the world isn’t simple; privacy advocates understand these things, and we face up to those balances all the time; and we’re not saying it’s particularly easy for Google to find appropriate balances in all of the things it does. We’re saying that it’s got to look and find balances, and in doing so, it’s got to appreciate what the public interest is and what the public’s concerns are. To do that, it’s got to engage with the public, and with people who know about these things, representatives of the public and advocates for the public interest. So our concern here is that Google hasn’t learnt, and at the moment is still trying to avoid learning.

Jordan Brown: What do you say to those people—because most people seem to ‘debunk’ this whole argument of privacy with, “Oh, if you’ve got nothing to hide, you’ve got nothing to fear.” What do you say to that?

Roger Clarke: Firstly I get really annoyed about it ‘cause I hear it so often in most interviews. I say things like, well the Jews who lived in Holland in 1939 had nothing to fear from a database that identified them as being Jews. Well they don’t have anything to fear now ‘cause they’re all dead, with very few exceptions. The people who had reasonable educational qualifications in Cambodia in the 70s had nothing to fear—what’s there to fear about knowing that you’ve got a certificate of an advanced diploma? Well most of them are dead too. That’s why I say there will always be another bigotry. You don’t know what it’s going to be. In Rwanda/Burundi it was being thought to be of a particular ethnic background. Now it’s not terribly easy for people to tell ethnic backgrounds when they’re tribal and they’re adjacent and they’ve been adjacent for hundreds and even thousands of years, but the decision was made that, if you were of that ethnic persuasion then you were dead. Now these are just the sharp end, where the worst case of invasion of privacy occurs and you get killed. There’s lots and lots of circumstances where obscure bits of information do harm to people that is somewhat less than killing them. Misinterpretation of, “Oh you’ve got a criminal record, we can’t hire anybody with a criminal record.” Well, you’ve got to work out what that was for. There have been people who had a criminal record because they were demonstrators against the Vietnam War or they were demonstrators against apartheid in South Africa, who once it has become apparent to the employer why they had a criminal record, they were instantly hired. You’ve got to understand the full background of the circumstances. There’s tests of relevance, there’s tests of accuracy, precision and so on. Now, information is complex and delicate and its use is complex and delicate. 
So when we look at personal information and we look at the use of personal data and the interpretation of personal data, we’ve got to be very very careful. Everybody has got something to hide. And everybody will discover in the coming years—5, 10 and 15 years from now—that some of the things they didn’t think they needed to hide would’ve been better off hidden, because they’ve suffered because of that information’s availability. It’s a delicate flower; we’ve got to be careful with people.

* * *

END OF TRANSCRIPT